The Shift to Agentic AI Platforms

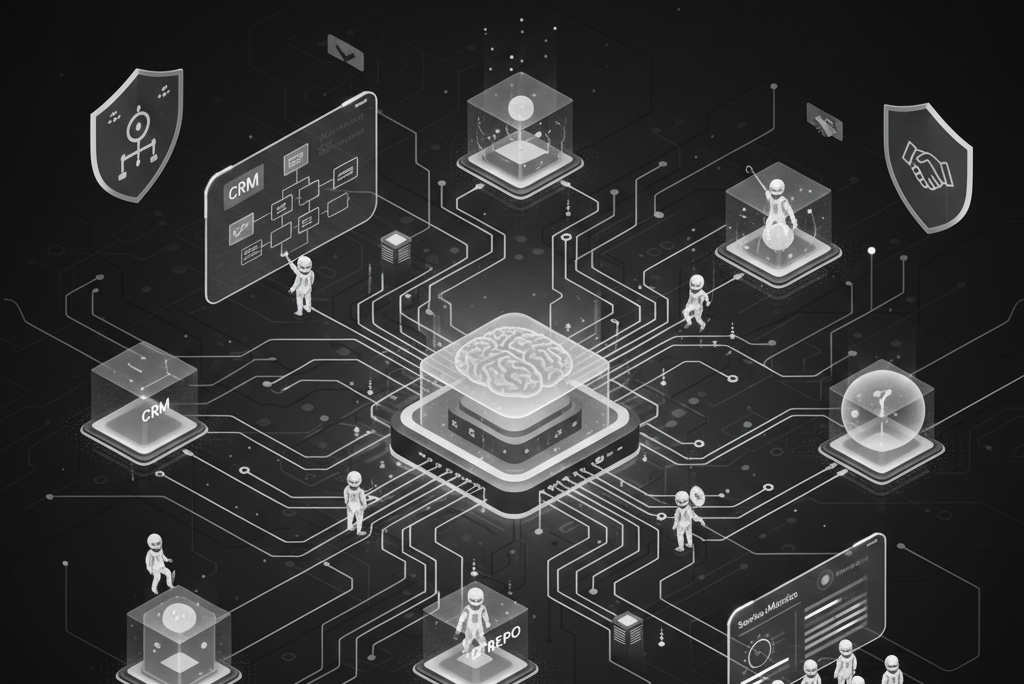

We are moving past the era of “chatbots that answer questions.” The next evolution of business infrastructure is Agentic AI—autonomous systems that don’t just talk, but plan, act, and improve.

For engineering leaders and architects, this represents a new functional stack. It’s a world where agents, tools, and APIs work together to write code, wire services, and drive end-to-end workflows. In this post, we’ll unpack what agentic AI really means, how autonomous dev tools fit in, and how to design production-grade automations that don’t turn into “black boxes.”

What is an Agentic AI Platform?

An agentic AI system is software that uses a model's reasoning to pursue a high-level goal over multiple steps, rather than answering a single prompt. Instead of a single LLM behind a chat window, you get a set of specialized agents capable of:

- Goal Decomposition: Breaking down a goal like “reduce onboarding time by 30%” into a series of logical tasks.
- Active Tool Use: Calling APIs to read from and write to external systems like your CRM, Git, or CI/CD pipelines.
- Self-Correction: Learning from the outcome of an action and adapting the plan if it fails.
- Human Coordination: Knowing when to pause and ask for human approval because confidence is low.
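
To make these four capabilities concrete, here is a minimal sketch of a control loop that exercises each one. Everything in it is an assumption made for illustration: the `Task` and `RunReport` types, the `plan`, `execute`, `revise`, and `ask_human` callables, and the `0.7` confidence threshold are hypothetical names and values, not part of any specific platform.

```python
from dataclasses import dataclass, field
from typing import Callable


@dataclass
class Task:
    description: str
    confidence: float  # hypothetical planner's self-reported confidence, 0.0 to 1.0


@dataclass
class RunReport:
    status: str = "running"
    log: list[str] = field(default_factory=list)


def run_agent(
    goal: str,
    plan: Callable[[str], list[Task]],
    execute: Callable[[Task], tuple[bool, str]],
    revise: Callable[[Task, str], Task],
    ask_human: Callable[[Task], bool],
    min_confidence: float = 0.7,
    max_attempts: int = 3,
) -> RunReport:
    report = RunReport()
    for task in plan(goal):  # goal decomposition
        # Human coordination: pause for approval when confidence is low.
        if task.confidence < min_confidence and not ask_human(task):
            report.log.append(f"rejected by human: {task.description}")
            report.status = "halted"
            return report
        for attempt in range(1, max_attempts + 1):
            ok, detail = execute(task)  # active tool use
            report.log.append(f"{task.description} (attempt {attempt}): {detail}")
            if ok:
                break
            task = revise(task, detail)  # self-correction: adapt after a failure
        else:
            # Every attempt failed, so stop instead of continuing the plan.
            report.status = "failed"
            return report
    report.status = "done"
    return report
```

In a real system `plan` and `revise` would be backed by a model and `execute` by the tooling layer; passing them in as plain callables keeps the loop itself easy to test.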

The Core Components

Modern platforms provide the “connective tissue” for these agents:

- State Management: Handling memory and context over long-running processes.
- Tool Integration: Standardized connectors for databases, SaaS, and internal queues.
- Scalable Runtime: The ability to run dozens or hundreds of agents concurrently.
- Guardrails: Policy-based controls that ensure an agent can’t go “rogue.”
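
As a rough illustration of the state-management and runtime pieces, the sketch below runs many agents concurrently with `asyncio` while capping how many are active at once. The `AgentState` type, the `run_one` and `run_fleet` functions, and the limit of 10 are hypothetical; `asyncio.sleep(0)` stands in for a real model or tool call.

```python
import asyncio
from dataclasses import dataclass, field


@dataclass
class AgentState:
    agent_id: str
    history: list[str] = field(default_factory=list)  # context carried across steps


async def run_one(state: AgentState, steps: int, limit: asyncio.Semaphore) -> AgentState:
    async with limit:  # cap how many agents are active at the same time
        for i in range(steps):
            await asyncio.sleep(0)  # stand-in for a model or tool call
            state.history.append(f"step {i} done")
    return state


async def run_fleet(
    agent_ids: list[str], steps: int = 3, max_concurrent: int = 10
) -> list[AgentState]:
    limit = asyncio.Semaphore(max_concurrent)
    jobs = [run_one(AgentState(agent_id), steps, limit) for agent_id in agent_ids]
    return await asyncio.gather(*jobs)  # results come back in input order
```

Calling `asyncio.run(run_fleet([f"agent-{i}" for i in range(100)]))` would drive a hundred agents with at most ten in flight. A production runtime would also persist each `AgentState` so a long-running process can resume after a restart.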

From Assistants to Autonomous Dev Tools

In engineering, we are seeing the rise of autonomous dev tools. These are more than just IDE plugins; they are software robots that operate over your entire codebase and infrastructure.

While a traditional assistant might suggest a snippet of code, an Agentic Dev Tool can:

- Perform deep refactoring across multiple services.
- Triage a regression test and suggest a fix.
- Manage a canary rollout via Kubernetes APIs.
- Ask for a human review only before the final “merge.”

Architecture: Agents, Tools, and APIs

At the heart of the system is the Tooling Layer. This is the set of functions an agent is allowed to call.

Example: A Tooling Layer in Python

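Here is how you might expose business and development tools to an agent via a structured interface. This keeps the actions predictable and lets a static type checker verify inputs (note that `TypedDict` is a type hint and is not enforced at runtime):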

    import requests
    from typing import TypedDict

    # --- Business tools ---

    class CreateTicketInput(TypedDict):
        """Payload accepted by the incident-ticket tool."""
        title: str
        description: str
        severity: str

    def create_incident_ticket(data: CreateTicketInput) -> dict:
        """Create an incident ticket and return the API's JSON response."""
        resp = requests.post(
            "https://ticketing.internal/api/incidents",
            json=data,
            timeout=5,  # seconds; fail fast instead of hanging the agent
        )
        resp.raise_for_status()  # raise on 4xx/5xx so the caller sees the failure
        return resp.json()

    # --- Dev tools ---

    def open_pull_request(branch: str, title: str, body: str) -> dict:
        """Open a pull request for `branch` and return the API's JSON response."""
        resp = requests.post(
            "https://git.internal/api/pr",
            json={"branch": branch, "title": title, "body": body},
            timeout=5,
        )
        resp.raise_for_status()
        return resp.json()

    # The dictionary exposed to the agent's reasoning engine
    TOOLS = {
        "create_incident_ticket": create_incident_ticket,
        "open_pull_request": open_pull_request,
    }
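
A tool dictionary like this still needs something to route the model's requested call to the right function. The sketch below shows one way that could look. It assumes the model emits a call shaped like `{"tool": ..., "args": {...}}`, which is a hypothetical format, and it uses a stand-in `echo_ticket` tool so it runs without a network call.

```python
import inspect
from typing import Any, Callable


def echo_ticket(title: str, severity: str) -> dict:
    """Stand-in tool (hypothetical) so the sketch runs without a network call."""
    return {"id": 1, "title": title, "severity": severity}


REGISTRY: dict[str, Callable[..., dict]] = {"create_incident_ticket": echo_ticket}


def dispatch(call: dict[str, Any], registry: dict[str, Callable[..., dict]]) -> dict:
    """Route a model-requested call to a registered tool, refusing anything else."""
    name = call.get("tool")
    args = call.get("args", {})
    tool = registry.get(name)
    if tool is None:
        return {"ok": False, "error": f"unknown tool: {name!r}"}
    try:
        # Check the arguments against the tool's signature before running it.
        inspect.signature(tool).bind(**args)
    except TypeError as exc:
        return {"ok": False, "error": f"bad arguments for {name}: {exc}"}
    return {"ok": True, "result": tool(**args)}
```

Returning a structured error instead of raising gives the agent something it can read and act on, for example by supplying the missing argument and trying again.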

Orchestrating a “Refactor & Rollout” Agent

Imagine an agent tasked with: “Migrate all internal services using v1/orders to v2/orders with zero downtime.” Its workflow would look something like this in a production runtime:

- Discovery: Search the codebase to build an inventory of affected services.
- Planning: Generate migration steps and identify required tests.
- Execution: Open PRs and trigger CI pipelines.
- Verification: Monitor metrics and roll back automatically if errors spike.
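
The four stages above can be sketched as a single function. This is a simplified illustration under stated assumptions: `discover`, `open_pr`, `error_rate`, and `rollback` are hypothetical callables you would wire to your own systems, planning is reduced to a fixed ordering, and the 1% error threshold is an arbitrary example value.

```python
from typing import Callable


def migrate(
    discover: Callable[[], list[str]],
    open_pr: Callable[[str], None],
    error_rate: Callable[[str], float],
    rollback: Callable[[str], None],
    max_error_rate: float = 0.01,
) -> dict[str, str]:
    outcome: dict[str, str] = {}
    services = discover()  # Discovery: inventory of affected services
    plan = sorted(services)  # Planning: here, simply a deterministic order
    for service in plan:
        open_pr(service)  # Execution: open the PR and let CI run
        if error_rate(service) > max_error_rate:  # Verification
            rollback(service)
            outcome[service] = "rolled_back"
            break  # stop the rollout instead of pushing on after a bad signal
        outcome[service] = "migrated"
    return outcome
```

Stopping at the first rollback is a deliberate choice in this sketch: services later in the plan are left untouched, so a human can inspect the failure before the migration continues.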

Designing APIs for “Agentic Consumption”

To work well with AI, your APIs need to be machine-friendly, not just human-readable.

- Narrow Actions: Agents perform better with specific tools (e.g., `update_shipping_address`) than generic “catch-all” endpoints.
- Idempotency: Because agents might retry an action if a network blip occurs, write operations must be safe to repeat.
- Semantic Errors: Instead of a generic `500` error, return a clear reason like: “Validation failed: Missing zip code.” This allows the agent to fix the input and try again.

Governance and Control

The ability to act is also a risk. Governance should be a “Day 1” design concern, not an afterthought:

- Scoped Access: A “Dev Agent” should never have access to the payroll tool.
- Policy-as-Code: Use a policy engine such as Open Policy Agent (OPA) to enforce rules like “No production deploys without human approval.”
- Auditability: Log every decision, tool call, and result. You should always be able to ask: “Why did the agent do that?”
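
The sketch below shows the shape of these three controls in plain Python. It is a stand-in for a real policy engine, not an OPA integration, and the scope table, tool names, and audit record fields are all hypothetical.

```python
import json
import time
from typing import Optional

# Hypothetical scope table: which tools each agent may call at all.
AGENT_SCOPES = {"dev-agent": {"open_pull_request", "deploy"}}
AUDIT_LOG: list[str] = []  # in a real system, an append-only store


def authorize(
    agent: str, tool: str, env: str, approved_by: Optional[str] = None
) -> tuple[bool, str]:
    if tool not in AGENT_SCOPES.get(agent, set()):
        decision = (False, "tool outside agent scope")  # scoped access
    elif tool == "deploy" and env == "production" and approved_by is None:
        decision = (False, "production deploy needs human approval")  # policy rule
    else:
        decision = (True, "allowed")
    # Auditability: record every decision, including the refusals.
    AUDIT_LOG.append(json.dumps({
        "ts": time.time(), "agent": agent, "tool": tool, "env": env,
        "approved_by": approved_by, "allowed": decision[0], "reason": decision[1],
    }))
    return decision
```

Logging the reason alongside the decision is what lets you answer “Why did the agent do that?” later without reconstructing the policy state by hand.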

A Pragmatic Path Forward

Don’t hand over the keys to the kingdom immediately. Start small:

- Read-only: Let agents observe and recommend.
- Drafting: Let them create tickets or PRs that require a human to “click go.”
- Low-risk Automation: Automate simple tasks like sandbox cleanup or notifications.
- Full Autonomy: Scale to high-trust workflows once your guardrails are proven.
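
One way to encode this ladder is as an explicit autonomy level that gates each action. The sketch below is illustrative only: the `Autonomy` enum, the action names, and the mapping of actions to levels are hypothetical and would be set by your own risk review.

```python
from enum import IntEnum


class Autonomy(IntEnum):
    READ_ONLY = 1
    DRAFTING = 2
    LOW_RISK = 3
    FULL = 4


# Hypothetical mapping: the minimum level at which an action runs unattended.
REQUIRED_LEVEL = {
    "recommend_fix": Autonomy.READ_ONLY,
    "open_draft_pr": Autonomy.DRAFTING,
    "clean_sandbox": Autonomy.LOW_RISK,
    "deploy_production": Autonomy.FULL,
}


def decide(action: str, level: Autonomy) -> str:
    # Actions missing from the table default to the strictest requirement.
    required = REQUIRED_LEVEL.get(action, Autonomy.FULL)
    return "run" if level >= required else "needs_human"
```

Raising an agent's level then becomes a single, reviewable configuration change rather than a scattering of special cases.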

Agentic AI isn’t about replacing teams—it’s about moving the routine coordination work to software, freeing your engineers to focus on strategy and complex judgment.